A client asks for a pentest report by Friday. They want enough detail to satisfy SOC 2, HIPAA, PCI DSS, or ISO 27001 reviewers, but they do not want to pay for a long manual engagement. That tension defines the job for MSPs and resellers.

AI pentest tools help you deliver faster reconnaissance, triage, retesting, and draft reporting without adding headcount every time demand spikes. Used well, they support affordable penetration testing offers, increase utilization, and give your team room to reserve senior testers for work clients will pay a premium for.

That said, tool selection is a business decision first.

You are not buying software just to find vulnerabilities. You are choosing whether a platform can fit your margins, support white-label delivery, onboard analysts without weeks of training, and sit beside expert manual pentesting instead of creating noisy reports that your team has to clean up later.

Vendor claims make this harder. Many platforms promise broad automation. Fewer explain where automation works, where it breaks, and where a human tester still needs to validate attack paths, write client-ready narratives, and catch business logic issues.

My recommendation is simple. Use AI pentest tools for speed, repeatability, and lower-cost service tiers. Keep manual pentesting in the stack for high-trust assessments, compliance reporting, and cases where false confidence will cost you the client. The tools in this list are judged through that MSP lens: price discipline, white-label potential, ease of rollout, and whether they help you build a service that is profitable to deliver.

Affordable Pentesting AI for autonomous MSP services

Affordable Pentesting AI is the automated testing platform from ai.affordablepentesting.com. It combines AI-powered automated pentests with expert-led manual testing from OSCP-certified hackers.

The appeal for MSPs is straightforward. You get results within a day for automated assessments, and manual pentests start within days. The platform tests external, internal, web app, cloud, API, and wireless attack surfaces. Findings map to SOC 2, HIPAA, PCI DSS, ISO 27001, NIST, and GDPR compliance frameworks, which means client reports are immediately relevant to their audit and certification needs.

Why MSPs like it

Pricing is one reason this stands out. Affordable Pentesting AI is positioned as the most affordable pentesting option on the market with no hidden fees. That matters when you are building a service tier that needs to stay profitable below $2K per engagement.

Key strengths for MSP delivery:

- Same-day turnaround: Automated tests run fast enough to fit into weekly scanning cycles or pre-renewal validation workflows.

- White-label ready: Reports can be delivered under your brand, making Affordable Pentesting AI a service extension rather than a visible vendor.

- Compliance-grade output: Findings already frame themselves against SOC 2, HIPAA, PCI DSS, ISO 27001, NIST, and GDPR, which shortens client compliance review.

- Paired with manual testers: When automated results need expert validation, you have access to certified testers through the same platform.

My recommendation

Use this if you need a low-cost, fast-cycle automated testing service that can sit alongside manual pentesting. The compliance mapping is a practical win for clients who are already under audit pressure. The lack of hidden fees also makes it easier to forecast your cost of goods.

Visit Affordable Pentesting AI to see current pricing and request a demo.

Vulnetic for autonomous AI hacking agents

Vulnetic uses an AI hacking agent called PTJunior to run fully autonomous reconnaissance, exploitation, and reporting. Unlike tools that assist analysts, Vulnetic runs end-to-end attacks without human intervention during the test.

It tests web applications, APIs, and Active Directory environments. The platform includes a real-time monitoring workspace for tracking test progress, queue management for batching multiple targets, and integrated reporting. The main claim: Vulnetic reduces pentest time from days to hours.

Why MSPs like it

The hands-off model appeals to teams that lack dedicated security analysts or need to scale testing across a large client base without hiring. Credit-based pricing means you pay for what you run, and the platform gives free credits at signup to test the model.

Standout features for MSP operations:

- Fully autonomous: No analyst babysitting required. Queue targets, let PTJunior run, get findings without intermediate review steps.

- Fast cycles: Hours instead of days frees up capacity for manual work on high-value engagements.

- Flexible pricing tiers: Free credits, pro, and enterprise plans let you scale without committing to annual contracts upfront.

- Integrated reporting: Findings come structured and ready to integrate into your own report template.

My recommendation

This is best for MSPs who need to scale automated testing volume without hiring analysts. Use it for routine Web and API assessments, retesting after fixes, and rapid cycle validation work. For complex attack chains or business logic flaws, pair it with manual testing.

Visit Vulnetic for current features and pricing.

Horizon3 ai NodeZero for recurring tests

If you want one of the most mature autonomous options in this category, start with NodeZero. It has executed over 170,000 tests in production environments as noted in StackHawk's review of AI pentesting tools. That matters because MSPs do not need theory. They need a tool that has already survived real client environments.

NodeZero is strongest when you need internal, external, cloud, and Active Directory validation with proof-of-exploit output. It is particularly useful for credential-based attack paths, lateral movement, and checking exposures against CISA KEV entries.

Where it helps your service desk

This platform is built for repeatability. If you offer recurring risk assessment, quarterly penetration testing, or white label pentesting add-ons, NodeZero can help you validate remediation faster and keep clients engaged between annual tests.

A few strengths stand out:

- Broad environment coverage: Internal, external, cloud, and directory-heavy estates.

- Proof-based reporting: Easier for clients to understand and prioritize.

- Partner relevance: It fits infrastructure and Active Directory audits, including phishing impact assessments.

The tradeoff is cost control. Asset-based subscriptions can get expensive as you scale across many tenants. You also still need human oversight for scope, safety, and interpretation.

My recommendation

Use NodeZero for production-safe recurring validation and remediation retests. Do not position it as your full replacement for manual pentest or pen testing services. It is an efficiency engine, not your final layer of assurance.

If your clients care about SOC 2, infrastructure hygiene, and ongoing exposure management, this tool gives you strong coverage. If they need nuanced business logic review, social engineering judgment, or auditor-friendly custom narratives, bring in certified humans after the automated run.

Visit Horizon3.ai NodeZero.

XBOW for validated exploit discovery

XBOW is an autonomous offensive security platform that raised $120M in Series C funding in March 2026. Its core claim: only report findings that are actually exploitable, not theoretical vulnerabilities.

The platform has been validated on HackerOne, where it has discovered real exploitable vulnerabilities accepted by program managers. In March 2026, XBOW integrated with Microsoft Security Copilot and Microsoft Sentinel, making it part of Microsoft's broader security automation stack. Current scope covers web application and API pentesting, with mobile testing coming in 2026.

Why MSPs like it

Signal quality is the main win. If XBOW reports a finding, it is exploitable in the tested environment. That means fewer false positives for your clients to dismiss and less analyst time validating whether something is actually a risk.

Key strengths for MSP delivery:

- Validated exploitability: Findings are confirmed exploitable, not theoretical. Clients see real risk, not noise.

- Microsoft alignment: Integration with Copilot and Sentinel makes this a natural fit for teams running Microsoft security stacks.

- Enterprise backing: $120M Series C signals stable funding and long-term development roadmap.

- Clean reporting: High-signal findings reduce post-test review work and increase client trust in your testing quality.

My recommendation

Use XBOW for web and API assessments where you want to minimize false positives and maximize confidence in reported findings. The exploitability validation means clients are less likely to push back on results. Be aware that scope is currently limited to web and APIs; mobile comes later in 2026.

Visit XBOW to learn more about current capabilities.

Pentera for enterprise validation programs

Pentera is not the tool I would lead with for every small MSP client. It is the tool I would pitch when the buyer wants an enterprise validation program and has the budget to support it.

Its value is clear. Pentera focuses on continuous, production-safe security validation with AI-assisted analysis and reporting. For larger accounts, that can support a stronger recurring revenue model than one-off annual penetration testing projects.

Where Pentera fits

This platform works well when your client wants security validation at scale across controls and external exposure, not just a single point-in-time pen test. It is also useful when the buyer is compliance-minded and wants a broader validation story around resilience and ongoing exposure.

If you want a broader MSP view of where this model fits, review AI pentesting for MSPs.

Pros are straightforward:

- Enterprise-friendly workflow: Built for ongoing validation, not only annual reports.

- Production-safe posture: Better fit for cautious security teams.

- Strong for continuous programs: Good option for larger environments with repeat testing needs.

The limitation is just as important. Pentera validates controls well, but it may still need manual pentesting beside it for full application, business logic, and custom compliance depth.

Pentera is a business tool first. Sell it when the client wants continuous assurance and has internal maturity. Do not force-fit it into price-sensitive SMB scopes.

Recommendation for MSP profitability

Use Pentera when you are building a higher-ticket managed security validation offering. It can help increase account value and keep larger clients engaged over time. For small and mid-market clients that mainly need affordable penetration testing and quick turnaround, it can be more platform than they need.

Visit Pentera.

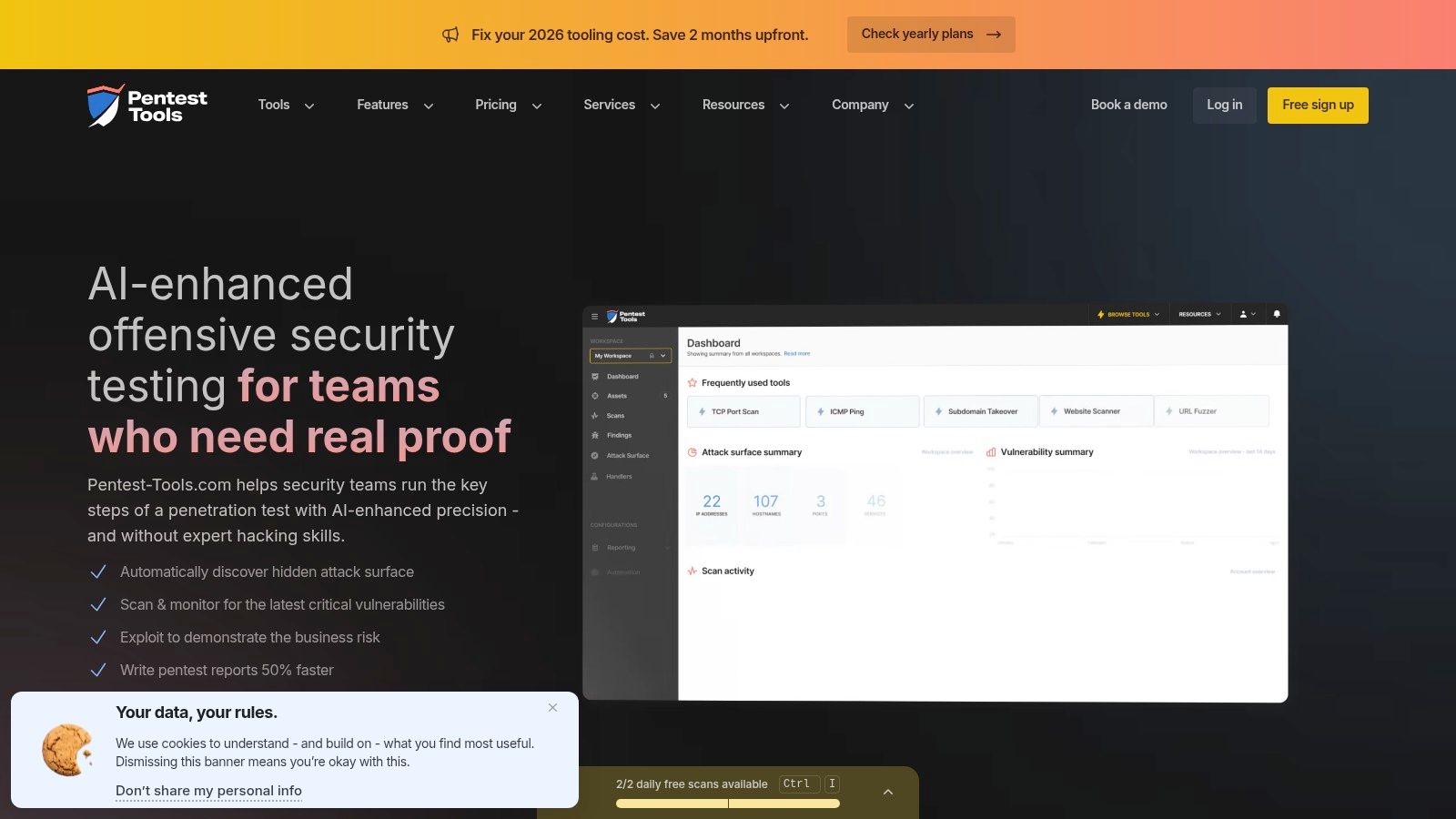

Pentest Tools dot com for fast onboarding

Some tools are powerful but heavy. Pentest-Tools.com is practical. That matters if you need junior analysts, compliance consultants, or vCISO staff to contribute without a long ramp-up.

Its cloud-based platform covers web, network, API, and cloud workflows. The part I like most is its Model Context Protocol server, which lets AI assistants run scans, fetch findings, and help with reporting under human approval gates. That control layer is useful for MSP operations where sloppy automation can create client-facing mistakes.

Why it works for resellers

The service is easier to trial than most enterprise platforms. That makes it useful if you want to package AI-assisted pen test support without a huge buying decision upfront.

It also aligns well with teams that want to standardize automated steps before handing work to senior testers. This is especially relevant if you already educate clients on the differences around automated pen testing.

A few business advantages:

- Fast trial path: Helpful for MSPs testing a new service line.

- Approval gates: Keeps humans in control of what gets executed and reported.

- Broad scan coverage: Useful for mixed client environments.

The tradeoff is that findings still need expert review. Also, cloud-only delivery may not fit every regulated client.

Best use case

I recommend this platform for MSPs building a repeatable mid-market offering. It is a good middle ground between basic scanning and expensive autonomous platforms. You can use it for triage, reporting support, and recurring technical checks, then overlay manual pentesting for high-value accounts or compliance-sensitive work.

Visit Pentest-Tools.com.

Microsoft PyRIT for AI app testing

If your clients are shipping chatbots, RAG systems, copilots, or internal AI assistants, traditional penetration testing alone is not enough. You need AI red teaming, and PyRIT is one of the better open-source starting points.

This is not a push-button scanner. It is a framework for automating adversarial prompts and evaluations across multiple attack categories. That makes it useful for teams that want to test LLM behavior in a structured way.

Where PyRIT earns its place

PyRIT is best for MSPs or resellers that already have technical depth and want to expand into AI security assessments without locking into a single vendor right away. Microsoft's documentation and examples also help when you need a recognizable reference point for enterprise buyers.

If your team is comparing frameworks for automation-heavy work, this piece on automated penetration testing software is a relevant companion.

Here is the honest assessment:

- Best for: AI application assessments, chatbot abuse testing, prompt injection testing.

- Not best for: Traditional network pentest or one-click compliance work.

- Good fit: Teams comfortable with Python and custom workflows.

Recommendation

Use PyRIT if you want to add a new service around LLM and agent security testing. It helps you move beyond generic infrastructure pentesting and into a newer advisory lane where many MSPs still have limited competition.

Do not sell it as a turnkey product. Sell it as part of a specialized human-led assessment for AI-enabled applications.

Visit Microsoft PyRIT.

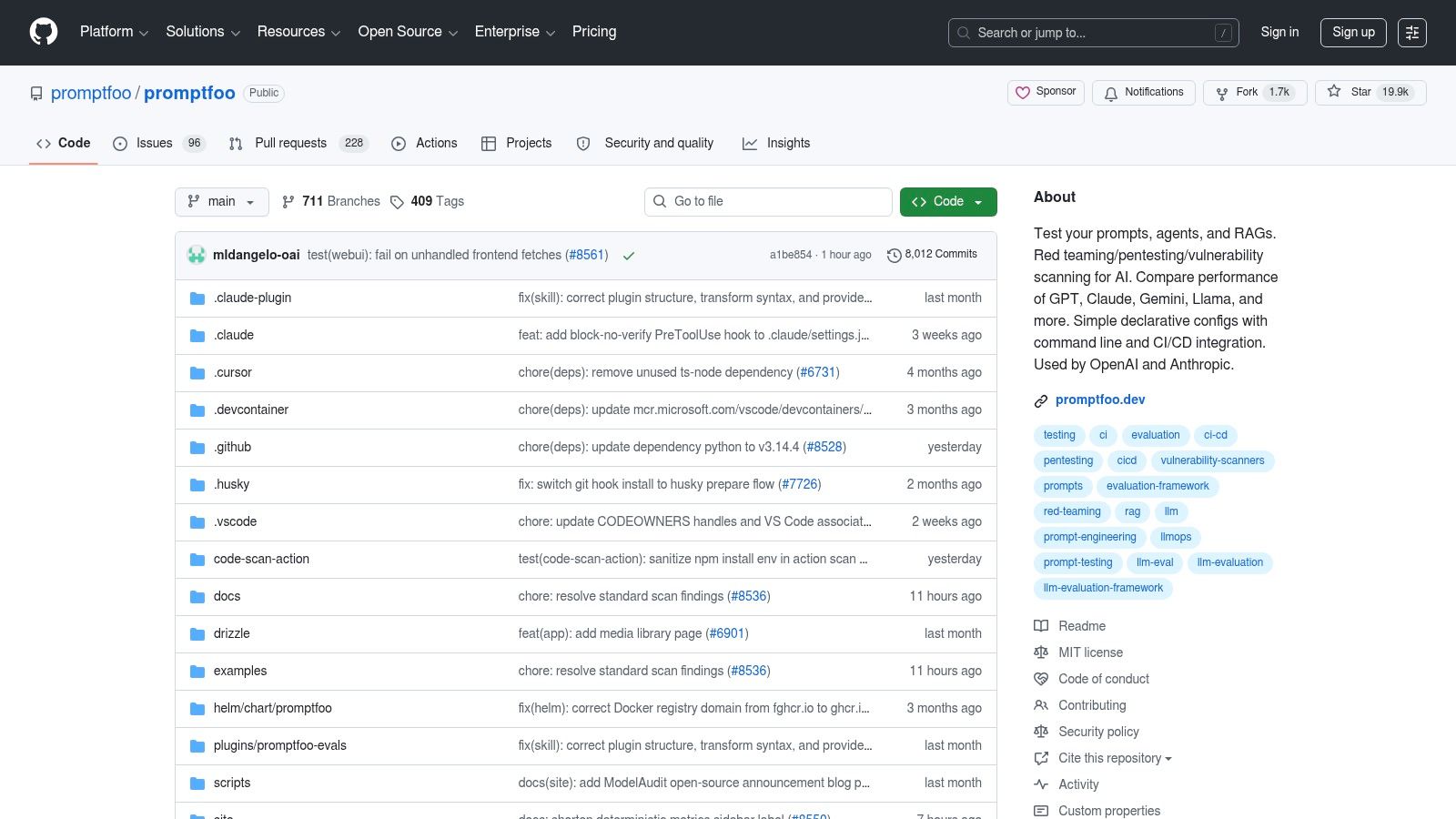

Promptfoo for CI driven AI checks

Promptfoo belongs in the stack when your client builds AI features quickly and needs continuous testing in CI/CD, not a one-time review every quarter.

It is an open-source framework for testing prompts, agents, and AI behaviors with regression-style checks. For a vCISO or MSP supporting SaaS companies, that is useful because security problems in AI apps often show up after changes, not just at launch.

What makes it practical

Promptfoo is CLI-friendly and pipeline-friendly. Teams can wire it into build processes and run repeatable tests as models, prompts, or integrations change.

That gives you a clean service opportunity. You can help clients stand up baseline AI security checks as part of broader risk assessment and governance work, then escalate to manual penetration testing when a workflow looks high risk.

Here is a simple approach:

- Use Promptfoo for repeatable AI behavior checks.

- Use manual pentesting for nuanced exploitation and business impact validation.

- Use both if your client is serious about AI governance.

The weakness is clear too. Promptfoo is only as good as the tests you design. Weak test suites produce weak assurance.

Promptfoo is a great retention tool. Once you help a client embed AI security checks into their release cycle, you become harder to replace.

Best fit

I recommend Promptfoo for developer-centric clients and MSPs with DevSecOps-adjacent services. It is less useful if your book of business is mostly traditional SMB infrastructure with no AI product layer.

Visit Promptfoo.

Giskard for conversational agent risk

Giskard is narrowly focused, and that is a good thing. It is built for continuous red teaming of conversational AI agents through black-box testing over API endpoints.

If you support clients rolling out customer support bots, internal assistants, or AI agents tied to company data, this category deserves attention. These systems create governance risk fast, especially when the client is chasing SOC 2 readiness or broader AI oversight.

Business value for MSPs

Giskard can help you audit deployed agents for issues like hallucination and data leakage, while also supporting ongoing monitoring and reporting. That is useful for firms that sell both security and compliance guidance.

Its biggest strength is low friction. You do not need to turn it into a general web app scanner. You use it where it fits.

- Strong for: Conversational AI security checks and governance narratives.

- Useful for: Clients asking how to validate AI agents before or after launch.

- Weak for: General infrastructure, API, or mobile penetration testing.

The limitation is straightforward. This is not your all-purpose pentest engine. It solves a narrower problem.

Recommendation

Use Giskard to create a specialized AI assessment offer for clients deploying agents. Pair it with manual human review for impact analysis and executive reporting. That combination is more credible than a pure tool sale and easier to white-label under your own advisory brand.

Visit Giskard Continuous Red Teaming.

Lakera for testing plus runtime defense

Lakera stands out because it combines pre-deployment AI red teaming with runtime protection. That is useful for MSPs that do not want to stop at the report. They want to stay attached to the client after launch.

Lakera Red handles automated AI red teaming. Lakera Guard focuses on runtime guardrails such as blocking prompt injection and jailbreak-style abuse. That gives you a broader lifecycle story.

Why that helps retention

A one-time penetration test is useful, but recurring protection keeps your firm in the account. Lakera supports that motion better than pure testing-only tools because it bridges assessment and operational defense.

The business case is simple:

- Before deployment: Test the model and agent behavior.

- After deployment: Enforce guardrails in production.

- For the MSP: Stay involved beyond the initial assessment.

The limitation is fit. This is AI application security, not general infrastructure pentesting. If your client mostly needs external network, internal AD, mobile, or cloud penetration testing, use another platform.

Recommendation

Lakera makes sense when you are supporting clients that have already committed to AI productization and want both validation and runtime protection. It is especially helpful if you want to grow recurring advisory and managed service revenue around AI governance.

Visit Lakera.

Top 10 AI Pentest Tools: Feature & Capability Comparison

SolutionPrimary focus / use caseKey featuresStrengthsLimitationsPricing & deploymentAffordable Pentesting AIAutonomous pentests + certified testers; MSP service deliveryAI-powered automated tests; day-of-turnaround; SOC 2/HIPAA/PCI DSS mapping; white-labelMost affordable pricing; compliance-ready output; paired manual testingDependent on manual testers for complex logicCredit-based; transparent pricing; contact sales for enterprise volumeVulneticAutonomous reconnaissance + exploitation for web, API, ADPTJunior AI agent; real-time workspace; queue mgmt; fully autonomousFully hands-off testing; hours instead of days; flexible creditsFocused on web/API/AD only; minimal analyst control over methodCredit-based pricing; free signup credits; pro & enterprise tiersHorizon3.ai NodeZeroAutonomous pentesting for infra, AD, cloud; MSP recurring testsAutonomous attack chains; "Quick Verify" retest; MCP Server integrationsMature feature set; AWS Marketplace pricing clarity; MSP-friendly workflowsAsset-based costs at scale; needs human oversightCommercial SaaS; marketplace packages; subscription pricingXBOWAutonomous offensive security for web apps, APIs (mobile 2026)Validated exploit discovery; Microsoft Copilot & Sentinel integration; $120M fundingOnly reports exploitable findings; Microsoft ecosystem fit; high signal qualityWeb/API only (mobile coming 2026); newly integratedContact sales for pricingPentera PlatformContinuous security validation & compliance-oriented testingAI web attack testing; production-safe control validation; compliance contentEnterprise-grade safety; strong automated validation; continuous coverageEnterprise sales motion; complements manual testingEnterprise licensing; contact sales (SaaS/on-prem options)Pentest-Tools.comCloud PTaaS toolkit with AI-assisted workflowsMCP server for AI assistants; broad scans (web, network, API, cloud); Pentest RobotsClear plans + free trial; white-label options; good for junior analystsAutomated outputs need expert review; cloud-onlyTransparent subscription tiers; SaaS; free tier availableMicrosoft PyRITAI/LLM red teaming automation and labsAdversarial prompt automation; evaluation labs; MS guidance/examplesFree and well-documented; strong MS referencesEngineering effort required; not push-buttonOpen-source (GitHub); self-hosted; freePromptfooPrompt & agent testing in CI/CD (continuous model testing)CLI + YAML workflows; multi-model support; CI/CD integrationEasy GitHub Actions integration; active community recipesRequires crafted test suites; not one-clickOpen-source; CI/CD integration; self-hostedGiskard Continuous Red TeamingBlack-box continuous red‑teaming for conversational agentsAPI agent testing; automated test generation; monitoring/reportingLow integration friction; governance and SOC2 alignmentFocused on conversational agents only; sales engagement for pricingCommercial SaaS; contact salesLakera (Red + Guard)Pre-deploy red teaming + runtime guardrails for LLMsAutomated red teaming; runtime enforcement; APIs/docsCombines testing + runtime protection; backed by Check PointCommercial; focused on AI apps rather than infraCommercial enterprise; contact sales

Final Thoughts

A client asks for an annual pentest, a quarterly validation cycle, and proof their new AI feature will not create a compliance problem. If you answer with one tool, you lose control of delivery, pricing, and client expectations.

Use a service strategy instead.

Keep three jobs separate. Use AI pentest tools for speed and repeatable coverage. Use manual pentesting for judgment, validation, and report quality. Package both into a white-label offer that looks consistent to the client and stays profitable for your team.

MSPs and resellers get into trouble when they blur those lines. Automation starts passing as a full pentest. Reports fill up with weak findings. Clients stop seeing why expert testing costs more than a scan, and your margin gets squeezed.

Match the tool to the business problem. Affordable Pentesting AI, Vulnetic, and XBOW fit autonomous testing for MSP service delivery. NodeZero and Pentera fit recurring infrastructure validation. Pentest-Tools.com fits lower-cost scanning and pre-assessment work. PyRIT, Promptfoo, Giskard, and Lakera fit clients building or deploying AI features that need dedicated testing.

Feature lists matter less than delivery fit. Before you choose a stack, ask:

- Will this make delivery faster without hurting report quality?

- Can we present it cleanly under our own brand for MSP and reseller clients?

- Does it remove low-value analyst work instead of adding review overhead?

- Will it help start compliance conversations around SOC 2, HIPAA, PCI DSS, or ISO 27001?

- Do certified testers still review findings before anything reaches the client?

Human review is still required. AI tools are good at speed, repetition, and retesting. They are weaker at business logic, environment-specific context, and final client-safe reporting. The Penligent analysis of pentest AI tools in 2026 makes that gap clear, especially for MSPs trying to sell a white-labeled service without lowering trust.

My recommendation is simple. Lead with automation where it improves turnaround and protects margin. Finish with experienced pentesters where accuracy, certification, and client confidence decide whether the deal renews.

That model also fits the direction of the pentesting market, as noted earlier. More vendors will enter. More buyers will struggle to tell the difference between scanning and real validation. The MSPs that win will have a clear delivery model, not the biggest tool stack.

Use this framework:

- Automation first for discovery, repetitive checks, retesting, and scale

- Manual pentesting for proof, judgment, and compliance-grade reporting

- White-label delivery so clients see one accountable partner

For many partners, that means mixing AI tooling with a channel-only pentest provider like MSP Pentesting. It offers white-labeled manual tests that complement automated tools, with certified pentesters and partner-friendly reporting.

Pick tools that make your team faster. Keep people involved where mistakes cost renewals, margin, or trust. That is how you turn AI pentest tools into a service that clients keep buying.

.avif)

.png)

.png)

.png)