Most MSPs are in the same spot right now. Clients want stronger security controls, cleaner compliance evidence, and better answers during risk assessment conversations, but they don't want enterprise-tool pricing or months of deployment pain.

That's where the Suricata intrusion detection system fits. It gives MSPs, vCISOs, GRC firms, and resellers a practical way to add serious network visibility without forcing a giant licensing discussion first. It also works well beside penetration testing services because it helps validate findings, monitor exposure over time, and turn one-time assessments into ongoing security value.

What Is the Suricata Intrusion Detection System?

Suricata is an open-source engine used for intrusion detection, intrusion prevention, and network security monitoring. For an MSP, that matters because one tool can help watch network traffic, flag suspicious behavior, and generate logs that are useful for both operations and compliance.

It isn't just another alert box. Suricata started in 2008 and was built with native multi-threading, which set it apart from older single-threaded IDS tools and made it better suited for modern traffic loads (Snort vs Suricata performance analysis). That design choice is a big reason security teams still rely on it in real environments.

Why MSPs care about it

MSPs usually need tools that do three things well:

- Stay affordable: Open-source matters when you're trying to protect margins and still deliver strong security services.

- Support compliance work: Good network evidence helps with SOC 2, HIPAA, PCI DSS, and ISO 27001 conversations.

- Fit beside manual work: A manual pentesting engagement finds issues at a point in time. Suricata helps monitor for related activity before and after the report.

Practical rule: If a tool helps your SOC workflow and your audit workflow at the same time, it usually earns its place.

For channel partners and resellers, that's useful. You can improve monitoring maturity, strengthen client reporting, and support white label pentesting follow-up without buying into a heavyweight platform that blows up your delivery cost.

How Suricata's Architecture and Capabilities Work

A client gets hit with a suspicious outbound connection at 2:00 a.m. The firewall allows it, the endpoint agent stays quiet, and the only way to explain what happened is to inspect the network traffic with enough context to separate noise from real risk. That is where Suricata earns its keep for MSPs.

It processes traffic fast, inspects it at multiple layers, and produces evidence your SOC can act on. For a channel partner, that matters because the same sensor can support managed detection, incident review, post-remediation validation, and parts of a threat and vulnerability management program without adding major licensing cost.

The three jobs Suricata can handle

Suricata can run in different roles based on the client's tolerance for risk, operational maturity, and change-control limits.

| Mode | What it does | Best use for MSPs |
|---|---|---|
| IDS | Watches traffic and alerts | A low-friction starting point for managed monitoring |
| IPS | Sits inline and can block traffic | Better for mature clients that want active prevention and can test policy changes |
| NSM | Captures network metadata and transaction details for investigation | Useful for SOC workflows, incident timelines, and audit evidence |

Most MSPs should start with IDS or NSM unless the client has the staff and process discipline to support inline blocking. IPS can reduce exposure, but a bad rule in the wrong place can interrupt business traffic. That trade-off needs to be deliberate.

What the engine is doing under the hood

Suricata uses a multi-threaded inspection pipeline, which lets it spread packet capture, decoding, stream handling, detection, and output work across available CPU cores. In practice, that makes it a better fit for busy edge links, east-west traffic in virtual environments, and multi-tenant monitoring where one slow process can become a bottleneck.

It also does more than basic signature matching. Suricata performs protocol-aware inspection, rebuilds sessions, and handles traffic conditions that often break weaker sensors, such as fragmented packets and out-of-order TCP streams. The Suricata project outlines those capabilities in its feature overview.

That matters during real investigations. Attackers and red teams do not always generate neat, textbook traffic. They tunnel over allowed protocols, split payloads across packets, or rely on session oddities to avoid shallow inspection. Suricata is designed to keep enough state to catch more of that behavior.

A practical way to look at the workflow is this:

- Packet acquisition: Pulls traffic from the interface or capture method you configure.

- Decode and normalization: Interprets packet structure and prepares traffic for inspection.

- Flow and stream tracking: Reassembles conversations so detections apply to actual sessions, not isolated packets.

- Protocol parsing: Understands application protocols well enough to inspect useful fields and transactions.

- Detection and logging: Applies rules, records events, and exports data analysts can search and correlate.
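To make the flow and stream tracking step concrete, here is a toy Python sketch. It is not how Suricata implements reassembly; the `Segment` type, the addresses, and the payloads are made up for illustration. It only shows why a detection applied to a rebuilt stream can catch something that no single packet reveals.

```python
from collections import defaultdict
from typing import NamedTuple


class Segment(NamedTuple):
    """A simplified TCP segment (hypothetical type; real packets carry far more state)."""
    src: str
    sport: int
    dst: str
    dport: int
    seq: int          # offset of the first payload byte in the stream
    payload: bytes


def flow_key(seg: Segment) -> tuple:
    """Group segments by one direction of a connection."""
    return (seg.src, seg.sport, seg.dst, seg.dport)


def reassemble(segments: list[Segment]) -> dict[tuple, bytes]:
    """Rebuild each flow's byte stream by ordering segments on sequence number."""
    flows: dict[tuple, list[Segment]] = defaultdict(list)
    for seg in segments:
        flows[flow_key(seg)].append(seg)
    return {
        key: b"".join(s.payload for s in sorted(segs, key=lambda s: s.seq))
        for key, segs in flows.items()
    }


# One HTTP request split across three segments that arrive out of order.
captured = [
    Segment("10.0.0.5", 40000, "203.0.113.9", 80, 13, b"wd HTTP/1.1\r\n"),
    Segment("10.0.0.5", 40000, "203.0.113.9", 80, 0, b"GET /etc"),
    Segment("10.0.0.5", 40000, "203.0.113.9", 80, 8, b"/pass"),
]

needle = b"/etc/passwd"
per_packet_hit = any(needle in s.payload for s in captured)
stream = reassemble(captured)[("10.0.0.5", 40000, "203.0.113.9", 80)]
stream_hit = needle in stream
```

In this sketch the per-packet check misses the pattern and the stream check finds it. A real engine also has to deal with overlaps, retransmissions, and missing segments, which this example ignores.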

Why the architecture matters to MSP delivery

Good architecture lowers operating cost.

If a sensor drops visibility under load, the client still pays for monitoring, but your team gets weaker evidence, noisier investigations, and harder post-incident reporting. Suricata's design helps avoid that failure mode, especially when it is tuned to the hardware and capture method instead of deployed with defaults and forgotten.

It also fits well with modern logging pipelines. Suricata can generate structured output that feeds SIEMs, data lakes, and log analytics frameworks like Flink, which gives MSPs options for white-label reporting without buying a large proprietary stack first.

For compliance-driven clients, that flexibility is useful. For pentesting follow-up, it is even better. After a white-label assessment finds weak segmentation, risky protocols, or suspicious outbound paths, Suricata can help confirm whether those patterns still exist in production traffic and whether remediation changed the environment.

That is the business case. MSPs get a high-performance network sensor that can support SOC work, compliance evidence, and pentest validation from one platform, while keeping margins intact.

Managing Rules and Logs for Better Insights

A Suricata deployment is only as useful as its rules and logs. The engine gives you horsepower, but the ruleset tells it what to care about, and the logs tell your team what happened.

For MSPs, this is where Suricata becomes more than an IDS. It becomes operational data you can feed into a SIEM, triage in a SOC, and use in a compliance review without spending hours translating raw packet captures.

Rules are the detection brain

Organizations often begin with community or vendor-supported rules and then tune from there. That's the right move. A default ruleset gives coverage fast, but it won't reflect a client's exact applications, cloud architecture, or acceptable network behavior.

What works in practice is simple:

- Start broad, then tune down: Get visibility first.

- Add custom logic for known risks: Especially after a penetration test or risk assessment.

- Disable noisy detections that create repeat false positives: Analysts stop trusting noisy tools.

The wrong approach is loading everything and calling it done. That creates alert fatigue fast, especially in busy MSP environments.
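One way to decide what to tune first is to count which signatures fire most often. The Python sketch below assumes alerts are read from Suricata's EVE JSON output, one JSON object per line, using the `event_type`, `alert.signature_id`, and `alert.signature` fields. The sample lines, signature names, and the `min_count` threshold are hypothetical.

```python
import json
from collections import Counter


def noisy_signatures(lines, min_count=3):
    """Return (sid, count, name) for signatures that fired at least min_count times."""
    counts = Counter()
    names = {}
    for line in lines:
        line = line.strip()
        if not line:
            continue
        event = json.loads(line)
        if event.get("event_type") != "alert":
            continue  # skip dns, http, flow, and other non-alert records
        alert = event.get("alert", {})
        sid = alert.get("signature_id")
        if sid is None:
            continue
        counts[sid] += 1
        names[sid] = alert.get("signature", "")
    return [(sid, n, names[sid]) for sid, n in counts.most_common() if n >= min_count]


def _alert(sid, name):
    """Build one illustrative EVE alert line (made-up data, not real traffic)."""
    return json.dumps({"event_type": "alert",
                       "alert": {"signature_id": sid, "signature": name}})


SAMPLE_EVE_LINES = (
    [_alert(1000010, "EXAMPLE Internal scanner sweep")] * 4
    + [_alert(1000001, "EXAMPLE Suspicious outbound TLS")]
    + [json.dumps({"event_type": "dns"})]
)

candidates = noisy_signatures(SAMPLE_EVE_LINES, min_count=3)
```

A high count does not prove a false positive. It is only a shortlist for an analyst to review before anything is suppressed or disabled.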

Why EVE JSON matters

Since 2014, Suricata has evolved into a broader network security monitoring platform by placing rich protocol metadata directly into its JSON output. A single event can carry the alert together with the protocol and transaction details around it, which helps analysts investigate activity such as anomalous logins and data exfiltration attempts, and those events feed directly into SIEMs for real-time analysis (Huntress overview of Suricata).

That's a big deal because EVE JSON acts like a universal translator. Your SIEM doesn't have to guess what the alert means. The event already includes structure and context.
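As a rough illustration of that structure, the Python sketch below parses one EVE-style alert and turns it into a single triage line. The event is made up for this example. The field names (`timestamp`, `flow_id`, `src_ip`, `dest_ip`, `alert.severity`, `tls.sni`, and so on) follow the EVE JSON format, but treat the exact set of fields as an assumption, since it varies with version and configuration.

```python
import json

# Illustrative event only; values are invented, not taken from a real sensor.
SAMPLE_EVENT = """
{
  "timestamp": "2024-05-01T02:00:13.000000+0000",
  "flow_id": 1234567890,
  "event_type": "alert",
  "src_ip": "10.0.0.5",
  "src_port": 49152,
  "dest_ip": "203.0.113.9",
  "dest_port": 443,
  "proto": "TCP",
  "alert": {
    "action": "allowed",
    "signature_id": 1000001,
    "signature": "EXAMPLE Suspicious outbound TLS",
    "category": "Potentially Bad Traffic",
    "severity": 2
  },
  "tls": {"sni": "example.invalid"}
}
"""


def summarize_alert(event: dict) -> str:
    """Flatten the alert and its protocol context into one line for triage."""
    alert = event["alert"]
    line = (
        f'{event["timestamp"]} sev={alert["severity"]} '
        f'{event["src_ip"]}:{event["src_port"]} -> '
        f'{event["dest_ip"]}:{event["dest_port"]} '
        f'[{alert["signature_id"]}] {alert["signature"]}'
    )
    sni = event.get("tls", {}).get("sni")
    if sni:
        line += f" sni={sni}"  # protocol metadata travels with the alert
    return line


summary = summarize_alert(json.loads(SAMPLE_EVENT))
print(summary)
```

The point is that nothing here requires regex parsing of free text. The SIEM, or a ten-line script, can read the fields directly.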

What good logging looks like

A strong MSP deployment usually focuses on these outputs:

- Alerts: Clear indicators that a rule matched.

- Transactions: Useful for understanding what happened inside a session.

- Files: Important when file movement matters to the investigation.

- PCAP references: Helpful when deeper packet review is needed.

If you're building bigger pipelines around that data, it's worth looking at log analytics frameworks like Flink to understand how teams process high-volume event streams without turning every log source into a bottleneck.

Rich logs are only useful if your team can search, correlate, and explain them to a client.

That's why structured output matters so much for GRC and vCISO work. It helps bridge the gap between analyst detail and executive reporting. It also ties neatly into adjacent service areas like threat and vulnerability management, where detection data and vulnerability context need to support the same story.

Choosing Your Suricata Deployment and Tuning Strategy

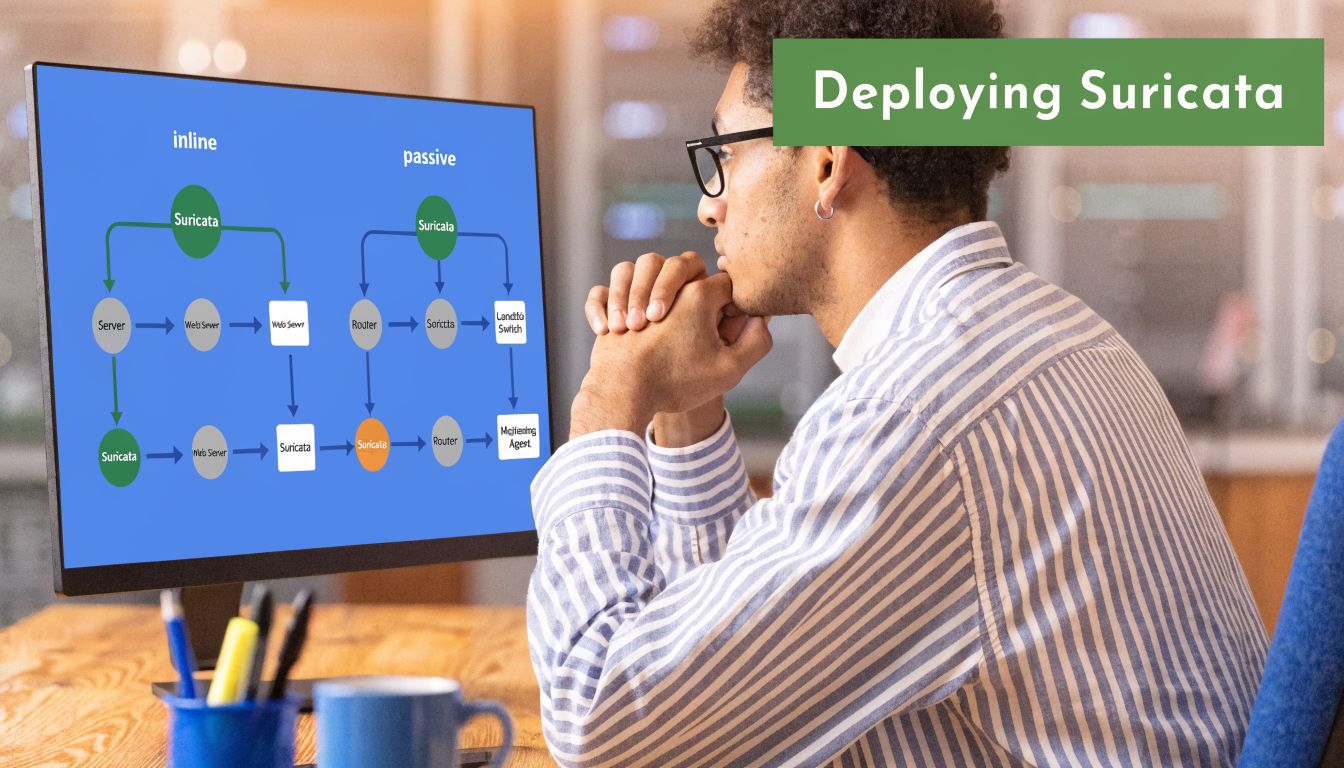

A client asks for better monitoring after a phishing incident, but they do not want anything in the production path that could interrupt business traffic. That is the point where deployment strategy matters. Suricata can sit off to the side and watch, or it can sit inline and enforce. The right choice depends on the client's risk tolerance, change window discipline, and your team's ability to tune policies without creating noise.

Passive IDS versus inline IPS

Passive IDS works like a security camera. It sees the traffic, records what matters, and alerts your team. Inline IPS works like a guard at the door. It can stop traffic, but it also becomes part of the client's availability risk.

| Deployment | Strength | Trade-off |
|---|---|---|
| Passive IDS | Lower operational risk, easier rollout | It won't block traffic |
| Inline IPS | Can actively stop malicious traffic | Higher complexity and more tuning required |

For MSPs, passive mode is usually the better first step. It gives you fast visibility, cleaner onboarding, and fewer change control objections from regulated clients. It also fits white-label monitoring services well because you can start producing useful security findings before you ask the client to trust an enforcement point.

Inline mode has a place. I recommend it after you understand normal traffic, have a process for rule exceptions, and know who approves emergency changes if a blocking policy catches business traffic. Without that discipline, an IPS can create support load that wipes out the value of the control.

Tuning for performance without overspending

Suricata is attractive to channel partners because it scales well on modest hardware if you deploy it carefully. Earlier testing cited in this article showed strong packet processing performance compared with Snort under heavier ruleset conditions, especially as CPU cores increased. The practical takeaway is simple. MSPs can get useful inspection depth without buying premium appliances for every client site.

That cost profile matters. It gives you room to package detection as a recurring service, reserve spend for storage and SIEM retention where it matters, and still support smaller clients that need evidence of monitoring for board reviews and audit prep.

A tuning plan that works in the field usually includes:

- Choose the right capture method: AF_PACKET is a common fit for Linux sensors and often a sensible starting point.

- Pin workers to CPU cores: CPU affinity helps keep packet handling stable under load.

- Trim the ruleset by client type: A healthcare clinic, law firm, and SaaS company should not run the same alert logic.

- Baseline before blocking: Start in alert-only mode, review false positives, then decide what should move to prevention.

- Watch sensor placement: North-south traffic is only part of the story. East-west visibility often matters more once an attacker gets inside.
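For the "trim the ruleset by client type" step, one possible approach is to keep a small per-client profile and generate the disable list from it. The Python sketch below assumes rules are managed with `suricata-update` and its `disable.conf` file, which accepts bare signature IDs and `group:` entries. The profile names, the group choices, and the noisy SID are hypothetical, so check them against the ruleset you actually run.

```python
# Hypothetical profiles: rule groups that rarely apply to each client type.
PROFILE_DISABLED_GROUPS = {
    "clinic": ["emerging-games.rules", "emerging-p2p.rules", "emerging-scada.rules"],
    "law_firm": ["emerging-games.rules", "emerging-scada.rules"],
    "saas": ["emerging-games.rules", "emerging-scada.rules", "emerging-voip.rules"],
}


def render_disable_conf(profile: str, noisy_sids=()) -> str:
    """Render disable.conf text for one client profile plus reviewed noisy SIDs."""
    if profile not in PROFILE_DISABLED_GROUPS:
        raise KeyError(f"unknown client profile: {profile}")
    lines = [f"# Generated for client profile: {profile}"]
    for group in PROFILE_DISABLED_GROUPS[profile]:
        lines.append(f"group:{group}")
    # De-duplicate and sort so the file stays stable between runs.
    for sid in sorted(set(noisy_sids)):
        lines.append(str(sid))
    return "\n".join(lines) + "\n"


clinic_conf = render_disable_conf("clinic", noisy_sids=[1000010, 1000010])
print(clinic_conf)
```

Keeping the profile in version control gives you a record of what was disabled for which client and why, which helps when an auditor or the client asks.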

Placement is where many deployments succeed or fail. A sensor at the internet edge will catch external scans and obvious command-and-control traffic, but it may miss lateral movement between VLANs, cloud workloads, or remote access paths. A formal network architecture review for security monitoring and sensor placement helps identify the choke points that produce the best signal for the least operational effort.

For MSPs that also advise on governance, the deployment decision supports more than detection. It affects incident response quality, control validation, and how confidently you can explain monitoring coverage to a client executive. That lines up well with broader Virtual CISO Services work, where architecture choices need to support both security operations and audit-facing documentation.

A noisy IPS burns trust fast. A well-placed, well-tuned IDS usually gives an MSP enough signal to prove value first, then expand into stronger enforcement where it makes business sense.

Using Suricata for Compliance and SOC Workflows

A lot of teams treat IDS tooling as purely operational. That's too narrow. Suricata is also useful as an evidence source.

For a vCISO, GRC advisor, or audit-facing MSP, that matters because clients don't just need security controls. They need records showing those controls are active, reviewed, and tied to a repeatable process.

Why auditors care about network evidence

Frameworks like SOC 2, HIPAA, PCI DSS, and ISO 27001 all push organizations toward demonstrable monitoring, incident handling, and control validation. Suricata helps by generating structured events that can show attempted access, policy violations, suspicious transactions, and investigation context.

That doesn't mean Suricata alone makes a client compliant. It means it can support the evidence trail.

A mature workflow usually includes:

- SIEM ingestion: Pull Suricata events into platforms your team already monitors.

- Use-case dashboards: Unauthorized access attempts, suspicious outbound traffic, or high-risk protocol activity.

- Retention and review: Logs need ownership and regular review, not just storage.

- Exception handling: When alerts are expected, document why.
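The retention and review item is easier to evidence if each review produces a small, repeatable summary. Here is a minimal Python sketch that counts alerts per day and severity from already-parsed EVE events. The sample events are invented, and the sketch assumes timestamps start with an ISO date (`YYYY-MM-DD`), as EVE timestamps normally do.

```python
from collections import Counter, defaultdict


def daily_alert_summary(events):
    """Count alerts per calendar day and severity for a review record."""
    summary = defaultdict(Counter)
    for event in events:
        if event.get("event_type") != "alert":
            continue
        day = event["timestamp"][:10]  # leading YYYY-MM-DD of the timestamp
        severity = event.get("alert", {}).get("severity")
        summary[day][severity] += 1
    return {day: dict(counts) for day, counts in sorted(summary.items())}


# Illustrative events only; severity 1 is the highest priority in Suricata.
SAMPLE_EVENTS = [
    {"timestamp": "2024-05-01T02:00:13.000000+0000", "event_type": "alert",
     "alert": {"severity": 1}},
    {"timestamp": "2024-05-01T09:14:02.000000+0000", "event_type": "alert",
     "alert": {"severity": 3}},
    {"timestamp": "2024-05-01T09:15:40.000000+0000", "event_type": "alert",
     "alert": {"severity": 3}},
    {"timestamp": "2024-05-02T11:30:00.000000+0000", "event_type": "alert",
     "alert": {"severity": 2}},
    {"timestamp": "2024-05-02T11:30:01.000000+0000", "event_type": "flow"},
]

report = daily_alert_summary(SAMPLE_EVENTS)
```

A summary like this does not replace analyst notes. It gives the reviewer a consistent starting point and leaves a dated artifact that shows the review happened.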

How it fits into SOC operations

SOC teams need speed and context. Compliance teams need traceability. Suricata can serve both if the outputs are routed correctly and the rules are tuned to the client's environment.

Here's where many MSPs miss the mark. They deploy the sensor, forward the logs, and stop there. That creates data, not a workflow.

A better model is to connect Suricata events to:

| Workflow | Example outcome |
|---|---|
| Detection triage | Analysts review suspicious sessions with more context |
| Compliance review | Teams show monitoring activity during audits |
| Incident response | Investigators pivot from an alert into related transactions |
| Client reporting | MSPs summarize security posture in plain language |

If you package this inside broader managed detection and response (MDR) services, Suricata becomes one of the practical telemetry sources that makes the service credible. It isn't the whole stack, but it can be a very effective part of it.
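The incident response pivot, from an alert into related transactions, can be sketched in a few lines of Python. This assumes events come from EVE JSON, where records belonging to the same flow share a `flow_id`. The sample events and values are made up.

```python
def related_events(events, alert):
    """Return non-alert events that share the alert's flow_id."""
    flow_id = alert.get("flow_id")
    if flow_id is None:
        return []
    return [
        e for e in events
        if e.get("flow_id") == flow_id and e.get("event_type") != "alert"
    ]


# Illustrative events only.
SAMPLE_EVENTS = [
    {"flow_id": 111, "event_type": "alert",
     "alert": {"signature_id": 1000001, "signature": "EXAMPLE Suspicious download"}},
    {"flow_id": 111, "event_type": "http",
     "http": {"hostname": "example.invalid", "url": "/payload.bin"}},
    {"flow_id": 222, "event_type": "dns"},
    {"flow_id": 111, "event_type": "fileinfo",
     "fileinfo": {"filename": "/payload.bin"}},
]

alert = SAMPLE_EVENTS[0]
context = related_events(SAMPLE_EVENTS, alert)
context_types = [e["event_type"] for e in context]
```

In practice the same pivot is usually a SIEM query on `flow_id` instead of a script, but the logic is the same: start from the alert, then pull every transaction and file record tied to that flow.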

Good compliance evidence is boring on purpose. It's organized, repeatable, and easy to explain.

That's exactly why structured network monitoring earns its keep. It helps the SOC operate better and gives the compliance side something concrete to stand on during a review, audit, or board conversation.

How MSPs Can Leverage Suricata for Pentesting

Suricata becomes much more valuable when you stop treating it as a standalone security product and start using it as a companion to penetration testing services.

A penetration test gives you a focused view of exploitable weaknesses. Suricata helps monitor the network for related activity before remediation is complete, after controls are changed, and during later validation checks. That turns a point-in-time engagement into an ongoing security feedback loop.

Where Suricata helps after a pentest

Say a manual pentest identifies a risky exposed service, weak segmentation path, or application behavior that should never appear on the wire. Suricata can then be tuned to watch for signs of that activity in production traffic.

That's useful for MSPs offering white label pentesting through certified partners because it helps answer the question clients always ask next: "How do we know if someone tries this again?"

A practical workflow looks like this:

- Run the penetration test and identify meaningful exposure.

- Map findings to observable network behavior that can be watched.

- Tune Suricata rules and logging around those indicators.

- Review activity during remediation and after controls change.

- Report back in business terms the client understands.
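The mapping and tuning steps in that workflow can be sketched as a small helper that turns a finding into a custom rule. The Python below is illustrative: the `Finding` type, the `PENTEST-FOLLOWUP` message prefix, and the example host are hypothetical, and the generated rule is deliberately simple. It uses a SID from the 1000000-1999999 range conventionally reserved for local rules, and should be tested against your own Suricata build before it goes anywhere near production.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Finding:
    """A hypothetical pentest finding reduced to what is visible on the wire."""
    title: str
    proto: str       # "tcp" or "udp"
    dest_ip: str
    dest_port: int


def finding_to_rule(finding: Finding, sid: int) -> str:
    """Build an alert rule for traffic reaching the service named in the finding."""
    if not 1000000 <= sid <= 1999999:
        raise ValueError("use a SID from the local range 1000000-1999999")
    # Quotes and semicolons are special in rule options, so swap them out of the message.
    msg = finding.title.replace('"', "'").replace(";", ",")
    return (
        f"alert {finding.proto} any any -> {finding.dest_ip} {finding.dest_port} "
        f'(msg:"PENTEST-FOLLOWUP {msg}"; flow:to_server; '
        f"classtype:policy-violation; sid:{sid}; rev:1;)"
    )


telnet = Finding("Telnet reachable on legacy host", "tcp", "10.0.5.20", 23)
rule = finding_to_rule(telnet, sid=1000001)
print(rule)
```

Once remediation closes the service, the same rule doubles as a validation check: if it keeps firing, the fix did not land where everyone thinks it did.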

The trade-offs MSPs need to manage

This only works if the deployment is tuned. A common challenge for MSPs using Suricata is resource optimization and alert fatigue, especially during red team simulations or cloud pentests. Untuned rules can lead to high CPU usage and a flood of alerts, while newer features like live rule reloads and conditional PCAP can cut storage costs by up to 60% for this kind of validation workflow (Suricata MSP tuning discussion).

That's the trade-off. Suricata is affordable, but it's not magic. If you dump in every rule, ignore tuning, and collect everything forever, you'll create noise and overhead.

What works and what doesn't

What works

- Targeted custom rules after a manual pentesting engagement

- Short feedback cycles between the pentest team and the monitoring team

- Focused PCAP collection tied to investigations

- Client-specific tuning based on environment and compliance needs

What doesn't

- Treating every alert like an incident

- Using stock rules without local context

- Skipping review of noisy detections

- Assuming a sensor replaces human-led penetration testing

For MSPs that want a tighter service loop, pairing Suricata with a scoped network penetration testing service creates a strong model. The pentest finds the weakness. Suricata helps watch for attempted abuse and supports later validation.

Visibility is helpful. Validated visibility tied to a real finding is where clients start seeing value.

That's especially important when you're trying to stay affordable. A well-run combination of manual pentesting, targeted monitoring, and clear reporting can deliver more practical security value than a bloated stack full of tools nobody has time to tune.

Partner With Us for Affordable Pentesting

Suricata gives MSPs a practical way to improve network monitoring, support SOC workflows, and strengthen compliance evidence without forcing a premium-tool budget. It also fits naturally beside penetration testing services by helping validate findings and monitor for repeat activity after a report is delivered.

If you want to expand your security offerings without competing against your own clients, a channel-only partner model makes the most sense. That's especially true when you need affordable, fast, white label pentesting backed by certified testers and clean reporting for MSP, vCISO, GRC, and reseller relationships.

If you need a channel-only partner for affordable manual pentests, fast turnaround, and fully white-labeled delivery, talk to MSP Pentesting. Our team includes OSCP, CEH, and CREST certified pentesters, and we help MSPs, vCISOs, GRC firms, and resellers deliver high-quality penetration testing without adding delivery overhead or channel conflict.
